Why would someone want to block search engines from indexing their website? There could be several reasons, most of them up to the individual webmaster, but one is especially important.

In this article I’ll explain that reason, and I’ll show you several ways to stop search engines from crawling and indexing your WordPress site.

When and why to stop search engines

Preventing search engines from crawling and indexing your site is one of the most important things to do after installing WordPress. Ideally, take this step as soon as you start building your site.

A lot of people build directly on their live domain rather than locally, so until the project is finished, things can get pretty messy. You don’t want search engines indexing all that mess.

Everyone should make sure they start the right way and with proper SEO for their WordPress website.

It can take weeks for your site, or parts of it, to get crawled and indexed, but sometimes it happens within a few days, so make sure you block search engines from the start.

Search engines crawl the web constantly, so it’s a mistake to think your site needs backlinks, or has to be submitted to Bing Webmaster Tools or Google Webmaster Tools (now called Google Search Console), before it can get crawled and indexed.

How to block search engines

1. Enable a WordPress setting

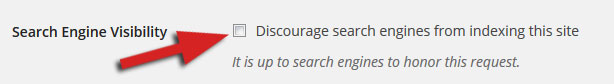

Go to Settings -> Reading, and enable “Discourage search engines from indexing this site”.

This adds the following rules to your robots.txt file:

```
User-agent: *
Disallow: /
```

It also adds the meta tag `<meta name='robots' content='noindex,follow' />` to the `<head>` section of your pages.

Note: If a robots.txt file already exists in your site’s root, WordPress will not replace it or modify the rules in it when you enable that setting, so you’ll have to add those rules yourself.
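To see what those two robots.txt lines mean to a crawler that follows the rules, here is a small sketch using Python’s standard-library `urllib.robotparser`. It assumes you paste the robots.txt text in directly; `is_crawlable` is a hypothetical helper, `example.com` stands in for your domain, and `Googlebot` is just an example user agent. It only models a crawler that chooses to honor robots.txt.

```python
from urllib.robotparser import RobotFileParser

# The robots.txt rules described above for the "Discourage search engines" setting.
DISCOURAGED = """\
User-agent: *
Disallow: /
"""

# For comparison: an empty Disallow line means "nothing is blocked".
OPEN = """\
User-agent: *
Disallow:
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if a crawler that honors robots.txt may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/", "https://example.com/sample-page/"):
        print(url, "discouraged:", is_crawlable(DISCOURAGED, url), "open:", is_crawlable(OPEN, url))
```

`Disallow: /` matches every path on the site, so a compliant crawler skips all of it, while the empty `Disallow:` line blocks nothing.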

Now, you might have noticed the note WordPress shows next to that setting: “It is up to search engines to honor this request.”

Both robots.txt and the meta robots tag are requests, not locks: well-behaved crawlers follow them, but nothing forces a crawler to, so search engines may still crawl and index parts of your site. That’s why this method isn’t 100% safe.
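A crawler that honors the meta robots tag has to download the page and look for the tag before deciding not to index it. Here is a minimal sketch of that check using Python’s built-in `html.parser`; the class and function names are hypothetical, and real search engines apply more rules than this (for example, crawler-specific tags such as `name="googlebot"`). Nothing obliges a crawler to run a check like this, which is exactly why the setting is only a request.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        # Also called for self-closing tags like <meta ... />.
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            # Directives are comma-separated, e.g. "noindex,follow".
            for directive in attributes.get("content", "").split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def page_says_noindex(html: str) -> bool:
    """Return True if the page asks robots not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return "noindex" in parser.directives


if __name__ == "__main__":
    page = "<html><head><meta name='robots' content='noindex,follow' /></head></html>"
    print(page_says_noindex(page))
```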

2. Password-protect your website through cPanel

If you password-protect your website, you can be sure that search engines won’t index it, because web crawlers can’t access content in password-protected directories.

Let’s see how you can do this…

1. Log into your cPanel and look for Password Protected Directories:

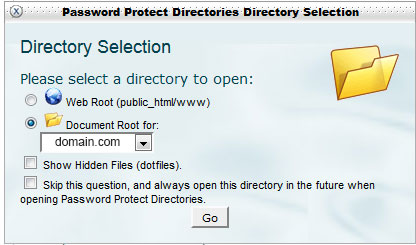

2. After you click it, a pop-up will appear asking you to select the document root:

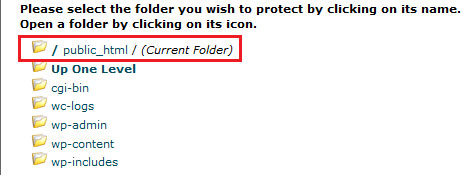

3. Press Go and then select the folder where WordPress is installed (usually public_html):

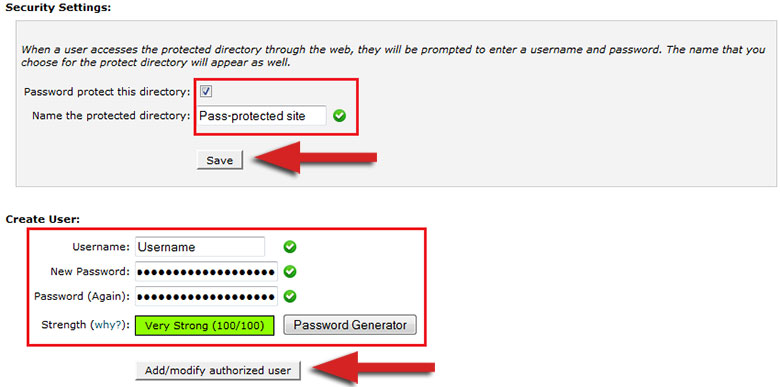

4. After that, check the box next to “Password protect this directory” and give the protected directory a name. Press Save, then go back (there’s usually a link for that) to create the user.

5. Enter a username and a strong password, then press “Add/modify authorized user”.

You’re done!
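cPanel’s directory protection works through HTTP Basic authentication (on Apache servers, typically set up via an `.htaccess` file): any request without valid credentials gets a `401 Unauthorized` response, so a crawler never sees the page content. The sketch below imitates that behaviour with Python’s standard library on localhost. It is not what cPanel runs, and the username, password, page body and realm name are placeholders.

```python
import base64
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Placeholder credentials standing in for the user you created in cPanel.
USERNAME, PASSWORD = "editor", "a-strong-password"
EXPECTED_AUTH = "Basic " + base64.b64encode(f"{USERNAME}:{PASSWORD}".encode()).decode()


class ProtectedHandler(BaseHTTPRequestHandler):
    """Serves a page only to requests that carry the right Basic auth credentials."""

    def do_GET(self):
        if self.headers.get("Authorization") != EXPECTED_AUTH:
            # Missing or wrong credentials: answer 401 and ask for Basic auth.
            self.send_response(401)
            self.send_header("WWW-Authenticate", 'Basic realm="Private site"')
            self.send_header("Content-Length", "0")
            self.end_headers()
            return
        body = b"<h1>Work in progress</h1>"
        self.send_response(200)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the demo quiet


# An opener that ignores any proxy settings, since we only talk to localhost.
OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def fetch_status(url, auth_header=None):
    """Return the HTTP status code of a GET request, optionally sending credentials."""
    request = urllib.request.Request(url)
    if auth_header is not None:
        request.add_header("Authorization", auth_header)
    try:
        with OPENER.open(request) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code


server = HTTPServer(("127.0.0.1", 0), ProtectedHandler)  # port 0: let the OS pick a free port
threading.Thread(target=server.serve_forever, daemon=True).start()
url = f"http://127.0.0.1:{server.server_address[1]}/"

crawler_status = fetch_status(url)               # a crawler has no credentials
owner_status = fetch_status(url, EXPECTED_AUTH)  # you, logging in
print("crawler:", crawler_status, "| owner:", owner_status)

server.shutdown()
server.server_close()
```

Unlike robots.txt or meta robots, this doesn’t depend on the crawler’s cooperation: without the password, the server simply refuses to send the page.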

3. Password-protect your website using a plugin

An easier way to password-protect your website and block search engines is to use a plugin, such as Password Protected or Hide My Site. The second one is a bit outdated, so we’ll just look at the first one.

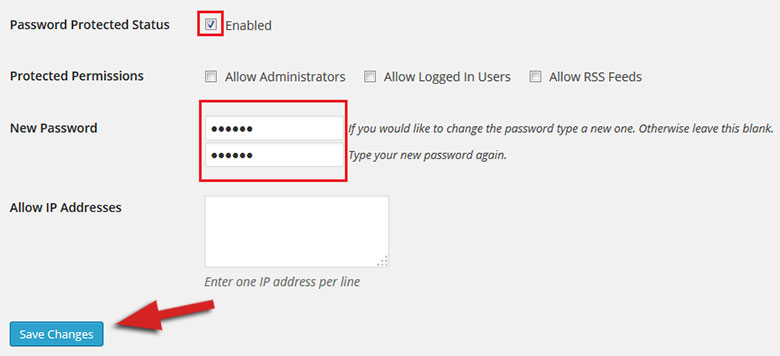

Install the Password Protected plugin and activate it. You can find it under Settings. Here’s how its settings look like:

Check the Enabled box, add a password, click Save Changes and that’s it!

Conclusion

If you want a nice, clean start, use one of these methods to keep search engines from crawling and indexing the mess that exists before you launch your WordPress site.

Hope you enjoyed the post! If you have anything to say or ask, please drop a comment!

This is a valid reason for discouraging search engines from indexing your WordPress site, but there is another one you should really consider: your site is meant for private or restricted use. Examples: a corporate intranet, some sort of private social network, a fancy private photo-sharing network. I’m currently building a theme for real estate agents to administer leases, so this comes in handy.

Yes, that’s another valid reason.

Just after writing the post, I stumbled upon someone asking for help in a community. He has a copy of his live site, which he uses for testing. He was worrying about duplicate content, and he was right to be worried.

He said that he had blocked search engines using robots.txt, but the site was still appearing in search results. So that’s another reason to password-protect a website: when you’re using a copy of your live website for testing and troubleshooting.

I learned a lot of new things while reading your post, and it opened my mind to the possibilities when it comes to search engines. Have you encountered spiders that still try to access your website even when you use meta robots?

We’re glad that you enjoyed our post!

Using meta robots should stop most web crawlers from indexing your content. I say “most” because some search engines might ignore it, so this option still isn’t 100% safe.

The simplest and safest way is to use password-protection.